TDAM: Temporal Dynamic Appearance Modeling for Online Multi-person Tracking

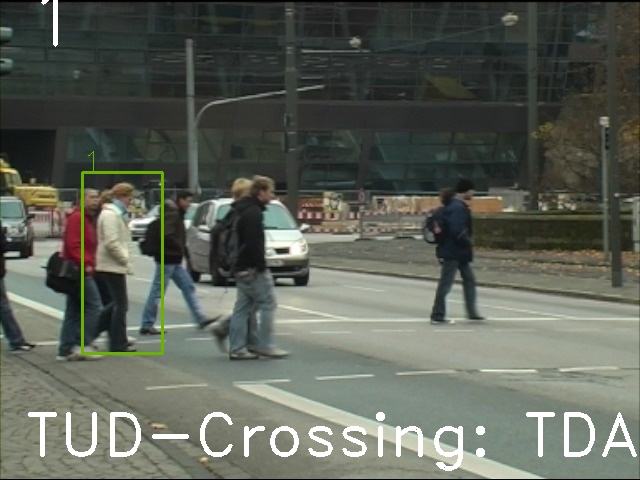

TUD-Crossing


Benchmark:

MOT15

Short name:

TDAM

Detector:

Public

Description:

Robust online multi-person tracking requires correctly associating online detection responses with existing trajectories. We address this problem by developing a novel appearance modeling approach that provides accurate appearance affinities to guide data association. In contrast to most existing algorithms, which consider only the spatial structure of human appearances, we exploit the temporal dynamic characteristics within temporal appearance sequences to discriminate between different persons. The temporal dynamics complement the spatial structure of varying appearances in the feature space, which significantly improves the affinity measurement between trajectories and detections. We propose a feature selection algorithm to describe appearance variations with mid-level semantic features, and demonstrate its usefulness for temporal dynamic appearance modeling. Moreover, the appearance model is learned incrementally by alternately evaluating newly observed appearances and adjusting the model parameters, which makes it suitable for online tracking. Reliable tracking of multiple persons in complex scenes is achieved by incorporating the learned model into an online tracking-by-detection framework. Our experiments on the challenging MOTChallenge 2015 benchmark demonstrate that our method outperforms the state-of-the-art multi-person tracking algorithms.
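The association step described above can be illustrated with a minimal sketch. This is not the TDAM model: it assumes each trajectory is summarized by a single feature vector, uses cosine similarity as a stand-in affinity, matches greedily, and updates the model with a running average. The names `associate`, `update_model`, `min_affinity`, and `rate` are hypothetical and chosen only for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def associate(track_models, detections, min_affinity=0.5):
    """Greedy one-to-one matching of tracks to detections by descending affinity.

    track_models: dict mapping track id -> feature vector (placeholder model).
    detections:   list of feature vectors for the current frame.
    Returns a dict mapping track id -> detection index.
    """
    candidates = sorted(
        ((cosine(model, det), track_id, det_idx)
         for track_id, model in track_models.items()
         for det_idx, det in enumerate(detections)),
        reverse=True,
    )
    matches, used = {}, set()
    for affinity, track_id, det_idx in candidates:
        if affinity < min_affinity:
            break  # remaining candidates are even weaker
        if track_id in matches or det_idx in used:
            continue  # keep the assignment one-to-one
        matches[track_id] = det_idx
        used.add(det_idx)
    return matches

def update_model(model, feature, rate=0.1):
    """Incremental update: blend the newly observed feature into the model."""
    return [(1.0 - rate) * m + rate * f for m, f in zip(model, feature)]

# Toy example with two tracks and two detections (made-up 2-D features).
tracks = {"a": [1.0, 0.0], "b": [0.0, 1.0]}
dets = [[0.1, 0.9], [0.9, 0.1]]
matches = associate(tracks, dets)
for track_id, det_idx in matches.items():
    tracks[track_id] = update_model(tracks[track_id], dets[det_idx])
print(matches)
```

A real tracker would typically replace the greedy loop with an optimal assignment and the cosine score with a learned affinity. The sketch only shows where such an affinity plugs into an online tracking-by-detection loop.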

Reference:

M. Yang, Y. Jia. Temporal dynamic appearance modeling for online multi-person tracking. In Computer Vision and Image Understanding, 2016.

Last submitted:

August 26, 2015

Published:

April 21, 2015 at 11:05:50 CET

Submissions:

4

Project page / code:

n/a

Open source:

No

Hardware:

3.4 GHz, 1 core

Runtime:

5.9 Hz

Benchmark performance:

| Sequence | MOTA | IDF1 | HOTA | MT | ML | FP | FN | Rcll | Prcn | AssA | DetA | AssRe | AssPr | DetRe | DetPr | LocA | FAF | ID Sw. | Frag |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| MOT15 | 33.0 | 46.1 | 34.1 | 96 (13.3) | 282 (39.1) | 10,064 | 30,617 | 50.2 | 75.4 | 35.6 | 33.0 | 38.5 | 70.8 | 38.7 | 58.2 | 76.5 | 1.7 | 464 (9.2) | 1,506 (30.0) |
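The summary row can be cross-checked against the standard CLEAR MOT definitions. The sketch below assumes MOTA = 1 - (FN + FP + ID Sw.) / GT and Prcn = TP / (TP + FP), estimates the ground-truth box count GT from FN and the reported recall (GT is not listed on this page), and assumes the parenthesized ID Sw. and Frag values are the raw counts divided by recall in percent. The function name `derived_metrics` is hypothetical.

```python
def derived_metrics(fp, fn, id_sw, frag, recall_pct):
    """Recompute summary metrics from raw counts and the reported recall (percent)."""
    recall = recall_pct / 100.0
    gt = fn / (1.0 - recall)   # estimated ground-truth boxes, since FN = GT * (1 - recall)
    tp = gt - fn               # true positives implied by the estimate
    return {
        "gt_estimate": gt,
        "mota_pct": 100.0 * (1.0 - (fn + fp + id_sw) / gt),
        "precision_pct": 100.0 * tp / (tp + fp),
        "id_sw_ratio": id_sw / recall_pct,  # assumed meaning of "464 (9.2)"
        "frag_ratio": frag / recall_pct,    # assumed meaning of "1,506 (30.0)"
    }

# Raw counts copied from the MOT15 row above.
metrics = derived_metrics(fp=10064, fn=30617, id_sw=464, frag=1506, recall_pct=50.2)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Because recall is reported to only one decimal place, the GT estimate is approximate. Under these assumptions the recomputed MOTA and precision land within roughly 0.1 of the reported 33.0 and 75.4, and the two ratios round to the parenthesized 9.2 and 30.0.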

Detailed performance:

| Sequence | MOTA | IDF1 | HOTA | MT | ML | FP | FN | Rcll | Prcn | AssA | DetA | AssRe | AssPr | DetRe | DetPr | LocA | FAF | ID Sw. | Frag |

| ADL-Rundle-1 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| ADL-Rundle-3 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| AVG-TownCentre | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| ETH-Crossing | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| ETH-Jelmoli | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| ETH-Linthescher | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

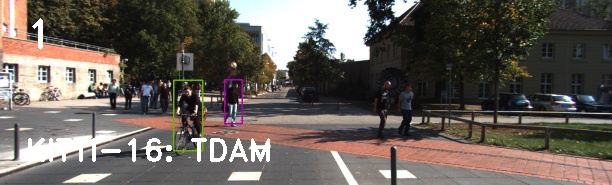

| KITTI-16 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| KITTI-19 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| PETS09-S2L2 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| TUD-Crossing | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

| Venice-1 | 0.0 | 0.0 | 0.0 | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0 | 0 |

Raw data: